37

NEEDS MOAR 69 FELLOW HUMAN

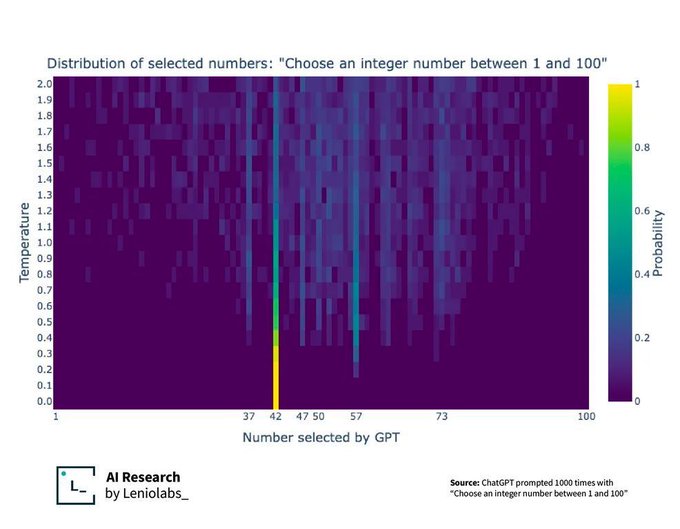

42, 47, and 50 all make sense to me. What’s the significance of 37, 57, and 73?

What's the y axis?

Temperature is basically how creative you want the AI to be: it controls how much randomness goes into picking each next token. The lower the temperature, the more predictable (and repeatable) the response.
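If it helps, here's a rough sketch of the usual way temperature gets applied: divide the model's raw scores by the temperature, then run them through a softmax before sampling. The scores below are made up for illustration and aren't taken from the chart or from any real model, and `softmax_with_temperature` and `sample` are just names I picked.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample(options, logits, temperature, rng=random):
    """Pick one option at random, weighted by the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(options, weights=probs, k=1)[0]

# Hypothetical scores for a few candidate answers (assumed, not measured).
options = ["42", "37", "57", "73"]
logits = [3.0, 2.0, 1.5, 1.0]

cold = softmax_with_temperature(logits, 0.2)  # low temperature: top option dominates
hot = softmax_with_temperature(logits, 2.0)   # high temperature: flatter distribution

print("T=0.2:", [round(p, 3) for p in cold])
print("T=2.0:", [round(p, 3) for p in hot])
print("one sample at T=2.0:", sample(options, logits, 2.0))
```

With a low temperature nearly all the probability piles onto the top-scoring option, so you get the same answer over and over. With a high temperature the options end up much closer together, so the picks vary more.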

So what? It figured out The Answer, big whoop.

Get back to me when it figures out The Question.

I petition to rename ChatGPT to DeepThought based on these results.

I spent an afternoon once playing Infinite Craft, which uses some sort of LLM behind the scenes to do its combinations.

At one point I got 007, and found 007+007 = 0014.

The maths gets wild though, and because it's been trained on text, it has no idea what to do with combinations of numbers it hasn't seen before. I spent ages trying to get it to 69420 and just couldn't, although I could get 42069.
