
Games

Welcome to the largest gaming community on Lemmy! Discussion for all kinds of games. Video games, tabletop games, card games etc.

Rules

1. Submissions have to be related to games

Video games, tabletop, or otherwise. Posts not related to games will be deleted.

This community is focused on games, of all kinds. Any news item or discussion should be related to gaming in some way.

2. No bigotry or harassment, be civil

No bigotry, hardline stance. Try not to get too heated when entering into a discussion or debate.

We are here to talk about and discuss one of our passions, not to fight or be exposed to hate. Posts or responses that are hateful will be deleted to keep the atmosphere good. If this rule is repeatedly violated, not only will the comment be deleted but a ban will be handed out as well. We judge each case individually.

3. No excessive self-promotion

Try to keep it to 10% self-promotion / 90% other stuff in your post history.

This is to prevent people from posting for the sole purpose of promoting their own website or social media account.

4. Stay on-topic; no memes, funny videos, giveaways, reposts, or low-effort posts

This community is mostly for discussion and news. Remember to search for the thing you're submitting before posting to see if it's already been posted.

We want to keep the quality of posts high. Therefore, memes, funny videos, low-effort posts and reposts are not allowed. We prohibit giveaways because we cannot be sure that the person holding the giveaway will actually do what they promise.

5. Mark Spoilers and NSFW

Make sure to mark your stuff or it may be removed.

No one wants to be spoiled. Therefore, always mark spoilers. Similarly, mark NSFW content, in case anyone is browsing in a public space or at work.

6. No linking to piracy

Don't share it here; there are other places to find it. Discussion of piracy is fine.

We don't want the moderators or the admins of lemmy.world to get in trouble for linking to piracy. Therefore, any link to piracy will be removed. Discussion of it is of course allowed.

Authorized Regular Threads

Related communities

PM a mod to add your own

Video games

Generic

- !gaming@Lemmy.world: Our sister community, focused on PC and console gaming. Memes are allowed.

- !photomode@feddit.uk: For all your screenshot needs, to share your love for game graphics.

- !vgmusic@lemmy.world: A community to share your love for video game music.

- !freegames@feddit.uk: A community sharing free game giveaways.

Help and suggestions

By platform

By type

- !AutomationGames@lemmy.zip

- !Incremental_Games@incremental.social

- !LifeSimulation@lemmy.world

- !CityBuilders@sh.itjust.works

- !CozyGames@Lemmy.world

- !CRPG@lemmy.world

- !horror_games@piefed.world

- !OtomeGames@ani.social

- !Shmups@lemmus.org

- !space_games@piefed.world

- !strategy_games@piefed.world

- !turnbasedstrategy@piefed.world

- !tycoon@lemmy.world

- !VisualNovels@ani.social

By games

- !Baldurs_Gate_3@lemmy.world

- !Cities_Skylines@lemmy.world

- !CassetteBeasts@Lemmy.world

- !Fallout@lemmy.world

- !FinalFantasyXIV@lemmy.world

- !Minecraft@Lemmy.world

- !NoMansSky@lemmy.world

- !Palia@Lemmy.world

- !Pokemon@lemm.ee

- !Silksong@indie-ver.se

- !Skyrim@lemmy.world

- !StardewValley@lemm.ee

- !Subnautica2@Lemmy.world

- !WorkersAndResources@lemmy.world

Language specific

- !JeuxVideo@jlai.lu: French

hmm, not sure if that would work, as the model he was using would be different from what's available, so he'd probably notice some differences. That might cause a mix of uncanny valley and surrealism/broken suspension of disbelief, where the two are noticeably not the same.

plus, using a chat-only model would be really tragic, as it's a significant downgrade from what they already had

his story actually feels like a Romeo and Juliet situation

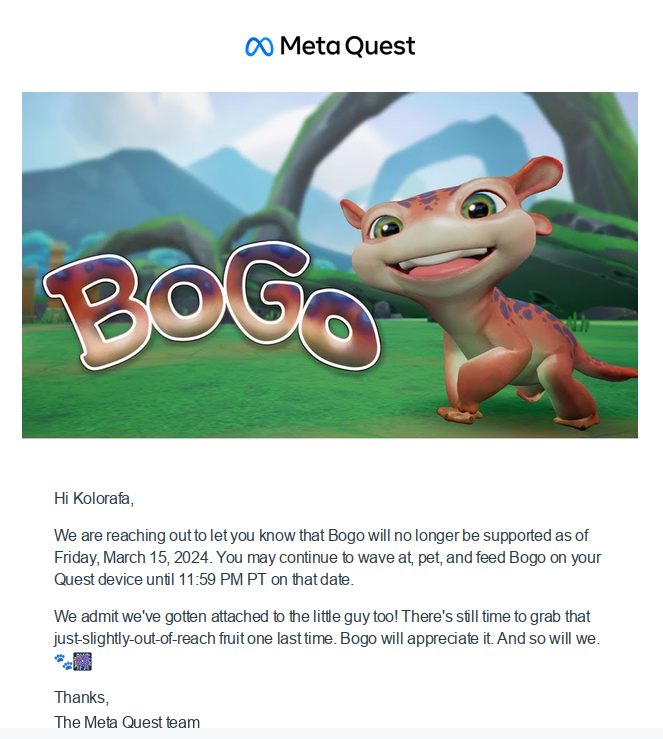

Doesn't even take a change of service provider to get there.

Replika had very obviously become a virtual mate service too, until they decided "love" wasn't part of their system anymore. Probably because it looked bad for investors, as happened with a lot of AI-based services people used for smut.

So a bunch of lonely people had their "virtual companion" suddenly lobotomized, and there's nothing they could do about it.

I always thought Replika was a sex chatbot? Is/was it "more" than that?

It's... complicated.

At first the idea was that you'd be training an actual "replica" of yourself that could reflect your own personality. Then, when they realized there was a demand for companionship, they converted it into a virtual friend. Then of course there was a demand for "more than friends", and yeah, they made it possible to create a custom mate for a while.

Then suddenly it became a problem for them to be seen as a light porn generator. Probably because investors don't want to touch that, or maybe because of a terms of service change with their AI service provider.

At that point they started to censor lewd interactions and pretend Replika was never supposed to be more than a friendly bot you can talk to. Which is, depending on how you interpret the services they offered and how they advertised them until then, kind of a blatant lie.

An LLM is capable of role-playing; character.ai, for example, can take on the role of any character after being trained. The voice is just text-to-speech, which character.ai already includes. If a realistic voice is desired, it would need to be generated by a more sophisticated method, which is already being done (examples: Neuro-sama, ElevenLabs).