this post was submitted on 07 Oct 2023

995 points (97.7% liked)

Technology

85169 readers

904 users here now

This is a most excellent place for technology news and articles.

Our Rules

- Follow the lemmy.world rules.

- Only tech related news or articles.

- Be excellent to each other!

- Mod approved content bots can post up to 10 articles per day.

- Threads asking for personal tech support may be deleted.

- Politics threads may be removed.

- No memes allowed as posts, OK to post as comments.

- Only approved bots from the list below, this includes using AI responses and summaries. To ask if your bot can be added please contact a mod.

- Check for duplicates before posting, duplicates may be removed

- Accounts 7 days and younger will have their posts automatically removed.

Approved Bots

founded 3 years ago

MODERATORS

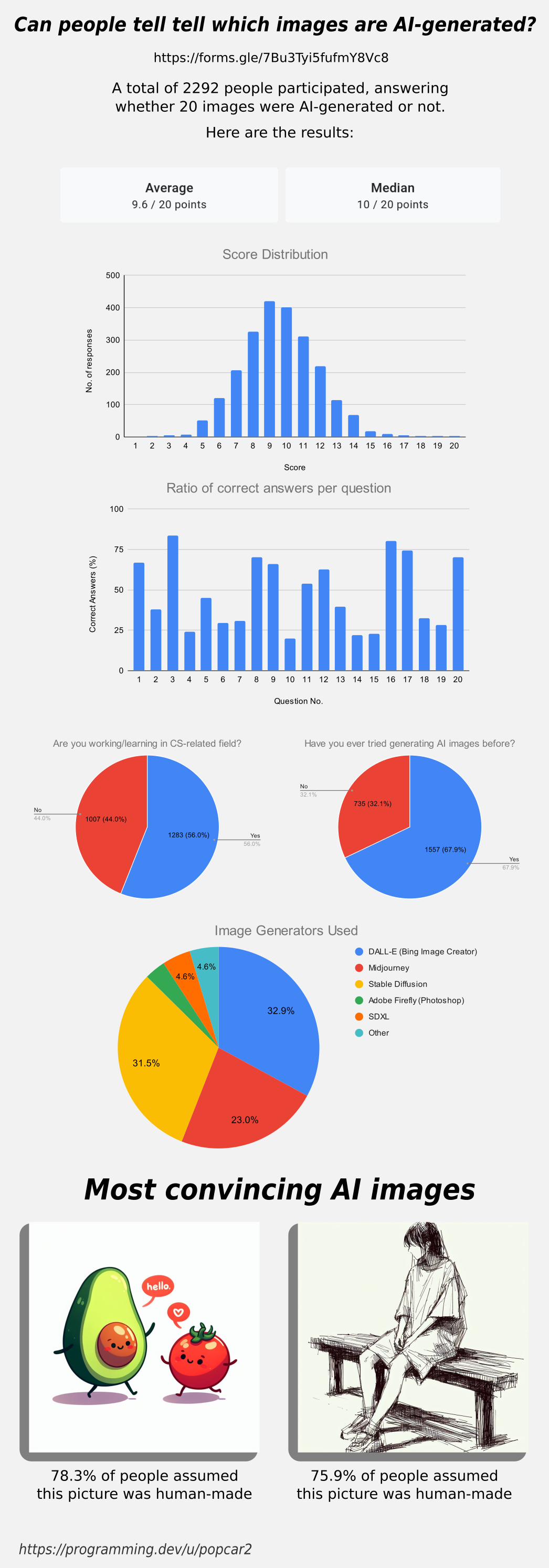

Having used Stable Diffusion quite a bit, I suspect the data set here uses only the most difficult-to-distinguish photos. Most results are nowhere near as convincing as these. Notice the lack of hands. Still, this establishes that AI is capable of creating art that most people can't tell apart from human-made art, albeit with some trial and error and a lot of duds.

Idk if I'd agree that cherry-picking images has any negative impact on the validity of the results. When people are creating an AI-generated image, particularly if they intend to deceive, they'll keep generating images until they get one that's convincing.

At least when I use SD, I generally generate 3-5 images for each prompt, often regenerating several times with small tweaks to the prompt until I get something I'm satisfied with.

Whether or not humans can recognize the worst efforts of these AI image generators is more or less irrelevant, because only the laziest deceivers will use the really obviously wonky images rather than cherry-picking.

AI is only good at a subset of all possible images. If you have images with multiple people, real-world products, text, hands interacting with stuff, unusual posing, etc., it becomes far more likely that artifacts slip in, oftentimes huge ones that are very easy to spot. For example, even DALL-E 3 can't generate a realistic-looking N64. It will generate something that looks very N64-ish and gets the overall shape right, but is wrong in all the little details: the logo is distorted, the ports have the wrong shape, etc.

If you spend a lot of time inpainting and manually adjusting things, you can get rid of some of the artifacts, but at that point you aren't really AI-generating images anymore, just using AI as a source for photoshopping. If you are just using AI and picking the best images, you will end up with a collection of images that all look very AI-ish, since they will all feature very similar framing, posing, layout, etc. Even though no individual image might look suspicious by itself, when you have a large number of them they always end up looking very similar, as they don't have the diversity that human-made images have and don't have the temporal consistency.

These images were fun, but we can't draw any conclusions from them. They were clearly chosen to be hard to distinguish. It's like picking 20 images of androgynous-looking people and then asking everyone to identify them as women or men. The fact that the success rate will be near 50% says nothing about the general skill of identifying gender.
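
To put rough numbers on that selection effect, here is a toy sketch in Python. Everything in it is made up for illustration: the `detectability` score per image, the assumption that a viewer's chance of a correct call is `0.5 + 0.5 * detectability`, the pool of 1000 images, and the quiz size of 20. None of it comes from the actual survey.

```python
def expected_accuracy(detectabilities):
    """Average chance of a correct call: 0.5 is a coin flip, 1.0 is certain.

    Assumes (hypothetically) that the chance of spotting an image
    rises linearly with its detectability score in [0, 1].
    """
    return sum(0.5 + 0.5 * d for d in detectabilities) / len(detectabilities)


# Hypothetical pool: 1000 generated images, detectability spread evenly over [0, 1).
pool = [i / 1000 for i in range(1000)]

# Cherry-picked quiz: only the 20 hardest-to-spot images from that pool.
quiz = sorted(pool)[:20]

pool_accuracy = expected_accuracy(pool)
quiz_accuracy = expected_accuracy(quiz)

print(f"whole pool:         {pool_accuracy:.3f}")
print(f"cherry-picked quiz: {quiz_accuracy:.3f}")
```

Under these assumptions the same viewers score about 75% on the whole pool but land right next to 50% on the cherry-picked quiz, so the quiz result alone can't tell you which of those two situations you're in.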