100% of the time, baby =)

If you scaled it based on the size of the integer you could get that up to 99.9% test accuracy. Like if it's less than 10 give it 50% odds of returning false, if it's under 50 give it 10% odds, otherwise return false.

That would make it less accurate. It's much more likely to return true on a non-prime than on a prime.

Correct. Not sure why people are upvoting. If 10% of numbers in a range are prime and you always guess false, you get 90% right. If you randomly guess true 10% of the time, you get ~82% right (0.9 × 0.9 + 0.1 × 0.1).
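To check that arithmetic, assume a fraction `d` of the inputs are prime and the function answers true with probability `q`, independently of the input. It is right when it says true on a prime (`d * q`) or false on a non-prime (`(1 - d) * (1 - q)`). The helper name `expected_accuracy` is made up for illustration:

```c
/* Expected accuracy of a guesser that answers "prime" with probability q,
   regardless of the input, on a range where a fraction d of inputs are prime. */
double expected_accuracy(double d, double q) {
    return d * q            /* says "prime" on a prime        */
         + (1.0 - d) * (1.0 - q); /* says "not prime" on a non-prime */
}
```

The slope with respect to `q` is `2d - 1`, which is negative whenever fewer than half the inputs are prime, so sprinkling in random true answers can only lower the expected score. With `d = 0.4` (the first ten numbers), always-false gives 0.6 and a coin flip (`q = 0.5`) gives 0.5.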

More random means more towards 50% correctness.

And 2, 3, 5 and 7 are the only primes among the first ten numbers, making always-false 60% correct and random chance 50%.

Code proof or it didn't happen.

Extra credit for doing it in Ruby

Makes me wonder where the actual break-even would be, like how long does generating one random number take versus sieve lookups? Fuck it, do it in parallel. Fastest wins.

Now you're thinking with ~~portals~~ primes!

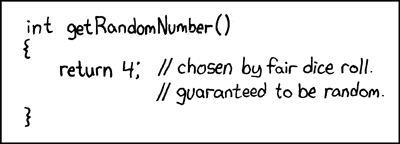

Reminds me of this xkcd:


View the source to see how I embedded the picture without copying it. The hover text had to be copied though.

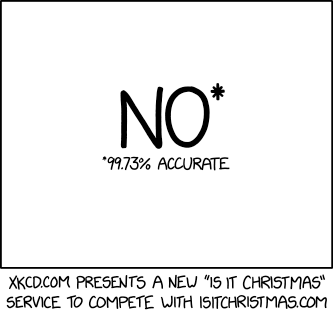

More like: https://xkcd.com/2236/


Has the same vibes as Anthropic creating a C compiler that passes 99% of compiler tests.

That last percent is really important. At least that last percent are some really specific edge cases right?

Description:

When compiling the following code with CCC using `-std=c23`: `bool is_even(int number) { return number % 2 == 0; }` the compiler fails to compile due to `bool`, `true`, and `false` being unrecognized. The same code compiles correctly with GCC and Clang in C23 mode.
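For context on why that report stings: C23 made `bool`, `true` and `false` keywords, so a conforming C23 compiler must accept the snippet with no header at all. Before C23 they came from `<stdbool.h>`, and including that header is still allowed under C23. A minimal sketch that builds as C99 through C23 (the `#if` guard is an illustration, not something from the bug report):

```c
/* C23 sets __STDC_VERSION__ to 202311L and makes bool/true/false keywords.
   Older standards need <stdbool.h>, which defines them as macros. */
#if !defined(__STDC_VERSION__) || __STDC_VERSION__ < 202311L
#include <stdbool.h>
#endif

/* The function from the report: true when number is divisible by 2. */
bool is_even(int number) { return number % 2 == 0; }
```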

Well fuck.

If this wasn't 100% vibe coded, it would be pretty cool.

A C compiler written in Rust, with a lot of basics supported and an automated test suite that compiles well-known C projects. Sounds like a fun project or academic work.

any llm must have several C compilers in its training data, so it would be a reasonably competent almost-clone of gcc/clang/msvc anyway, right?

is what i would have said if you didn't put that last part

You're still correct. The thing about LLMs is that they're statistical models that output one response picked from a list of the most likely responses. There's still some randomness in that pick, though. You can tune this, but no randomness is shit, and too much randomness sometimes generates stupid garbage. With a large enough output and any randomness at all, you're statistically likely to generate some garbage. A compiler is large and complex enough that it's going to end up with garbage somewhere, even if the model was trained on these compilers specifically.
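The "large enough output" argument can be made concrete under a deliberately simplified, hypothetical model: each of `n` generated tokens is independently bad with a small probability `p`. The chance of at least one bad token is then `1 - (1 - p)^n`, which climbs toward 1 as `n` grows. The function name is made up for illustration, and real models do not make independent errors, so treat this only as intuition:

```c
/* Hypothetical model: each of n tokens is independently "bad" with
   probability p. Returns P(at least one bad token) = 1 - (1 - p)^n,
   computing the power by repeated squaring so no math library is needed. */
double prob_any_bad(double p, unsigned long n) {
    double base = 1.0 - p;  /* probability a single token is fine */
    double all_good = 1.0;  /* accumulates (1 - p)^n */
    while (n > 0) {
        if (n & 1UL) all_good *= base;
        base *= base;
        n >>= 1;
    }
    return 1.0 - all_good;
}
```

For example, `prob_any_bad(0.001, 10000)` is above 0.9999: a one-in-a-thousand slip rate over ten thousand tokens almost guarantees at least one slip.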

that's a great point but wouldn't the output for a solved problem like "make a working C compiler in rust" work better if the temperature/randomness were zero? or am i fundamentally misunderstanding?

Probably. At that point you might as well just copy/paste the existing compiler though. The temperature is basically the thing that makes it seem intelligent, because it gives different responses each time, so it seems like it's thinking. But yeah, having it just always give the most likely response would probably be better, but also probably wouldn't play well with copyright laws when you have the exact same code as an existing compiler.
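Roughly, temperature `T` turns the model's scores `z_i` into probabilities proportional to `exp(z_i / T)` before a token is drawn, and as `T` approaches 0 the draw collapses onto the single most likely token. A minimal sketch of just the two endpoints being debated, assuming the probabilities are already computed (both function names are made up for illustration):

```c
#include <stddef.h>

/* Temperature 0 (greedy decoding): always pick the most likely token. */
size_t pick_greedy(const double *probs, size_t n) {
    size_t best = 0;
    for (size_t i = 1; i < n; i++)
        if (probs[i] > probs[best]) best = i;
    return best;
}

/* Temperature > 0: sample a token in proportion to its probability.
   u is a uniform random number in [0, 1) supplied by the caller. */
size_t pick_sampled(const double *probs, size_t n, double u) {
    double cumulative = 0.0;
    for (size_t i = 0; i < n; i++) {
        cumulative += probs[i];
        if (u < cumulative) return i;
    }
    return n - 1; /* guard against rounding when probabilities sum to just under 1 */
}
```

With probabilities `{0.7, 0.2, 0.1}`, `pick_greedy` returns index 0 every time, while `pick_sampled` returns index 1 or 2 for roughly 30% of uniformly drawn `u` values.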

This is so fucked up. The AI company has the perfect answer and yet it rolls the die to recreate the same thing by chance. What are they expecting, really?

they don't care. they expect to be able to say "our AI agent made a C compiler that passed 99% of our tests"

My favorite part of this is that they test it up to 99999 and we see that it fails for 99991, so that means somewhere in the test they actually implemented a properly working function.

No, it's always guessing false and 99991 is prime so it isn't right. This isn't the output of the program but the output of the program compared with a better (but probably not faster) isprime program

Yes, that's what I said. They wrote another test program, with a correct implementation of IsPrime in order to test to make sure the pictured one produced the expected output.
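A sketch of the kind of test harness being described. It assumes (a guess, not something visible in the thread) that the suite sweeps every integer from 0 to 99999 and compares against a slow trial-division reference. All names are hypothetical. Under that assumption there are 9,592 primes to get wrong, the largest being 99991, so always-false scores 90,408 out of 100,000 (about 90.4%). The exact percentage depends on which inputs the suite actually uses.

```c
#include <stdbool.h>

/* The function from the meme: always answers "not prime". */
bool is_prime(int x) { (void)x; return false; }

/* Slow but obviously correct reference: trial division.
   d <= x / d is used instead of d * d <= x to avoid overflow. */
bool is_prime_reference(int x) {
    if (x < 2) return false;
    for (int d = 2; d <= x / d; d++)
        if (x % d == 0) return false;
    return true;
}

/* Hypothetical harness: counts inputs in [0, limit] where the two
   implementations disagree and records the largest failing input. */
int count_mismatches(int limit, int *largest_failure) {
    int mismatches = 0;
    *largest_failure = -1;
    for (int x = 0; x <= limit; x++) {
        if (is_prime(x) != is_prime_reference(x)) {
            mismatches++;
            *largest_failure = x;
        }
    }
    return mismatches;
}
```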

Plot twist: the test just checks to see if the input exists in a hardcoded list of all prime numbers under 100000.

I mean, people underestimate how useful lookup tables are. A lookup table of primes, for example, is basically always better, except in the one case where you are searching for primes, which is more maths than computer programming anyways. The modern way is to abstract and reimplement everything when there are much cheaper and easier ways of doing it.
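For the lookup-table idea, here is a sketch of the classic way to build one, the sieve of Eratosthenes. You precompute the table once, and every "is n prime?" query up to the limit becomes a single array read. `build_prime_table` is a hypothetical helper. It assumes `limit` is non-negative and that one `bool` per number fits comfortably in memory.

```c
#include <stdbool.h>
#include <stdlib.h>

/* Returns a table where table[n] is true iff n is prime, for 0 <= n <= limit.
   The caller must free() it. Returns NULL on bad input or allocation failure. */
bool *build_prime_table(int limit) {
    if (limit < 0) return NULL;
    bool *table = malloc(((size_t)limit + 1) * sizeof *table);
    if (table == NULL) return NULL;
    for (int i = 0; i <= limit; i++)
        table[i] = (i >= 2);              /* 0 and 1 are not prime */
    for (int p = 2; p <= limit / p; p++) {
        if (!table[p]) continue;          /* p already crossed out */
        /* Cross out multiples of p, starting at p*p (smaller ones are done). */
        for (long long m = (long long)p * p; m <= limit; m += p)
            table[m] = false;
    }
    return table;
}
```

Usage: `bool *primes = build_prime_table(99999);` then `primes[99991]` is true and `primes[99999]` is false.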

> more maths than computer programming anyways

Computer programming is a subset of maths and was invented by a mathematician, originally to solve a maths problem...

Yeah, but they slowly develop into their own fields. You wouldn't argue that physics is math either, or that chemistry could technically be called a very far branch of philosophy. Computer programming, physics, etc. are the applied versions of math: you are no longer studying math, you are studying something else with the help of math. Not that it matters much, it just makes distinguishing between them easier. You can draw the line anywhere, but people do generally have a somewhat shared idea of where it lies.

That's a legitimate thing to do if you have a slow implementation that's easy to verify and a fast implementation that isn't.
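As an illustration of that pattern (not something from the thread): a fast primality test that is hard to eyeball, checked against trial division, which is slow but obviously right. The fast one here is deterministic Miller-Rabin for 32-bit inputs using bases 2, 7 and 61, which is known to be exact for all n below 4,759,123,141. The function names are hypothetical.

```c
#include <stdbool.h>
#include <stdint.h>

/* (base^exp) mod mod; safe because mod < 2^32 keeps every product below 2^64. */
static uint64_t pow_mod(uint64_t base, uint64_t exp, uint64_t mod) {
    uint64_t result = 1;
    base %= mod;
    while (exp > 0) {
        if (exp & 1) result = result * base % mod;
        base = base * base % mod;
        exp >>= 1;
    }
    return result;
}

/* Fast: deterministic Miller-Rabin for 32-bit n with bases 2, 7, 61. */
bool is_prime_fast(uint32_t n) {
    if (n < 2) return false;
    static const uint32_t bases[] = {2, 7, 61};
    for (int i = 0; i < 3; i++) {
        if (n == bases[i]) return true;
        if (n % bases[i] == 0) return false;
    }
    uint32_t d = n - 1;                 /* write n - 1 = d * 2^s with d odd */
    int s = 0;
    while ((d & 1) == 0) { d >>= 1; s++; }
    for (int i = 0; i < 3; i++) {
        uint64_t x = pow_mod(bases[i], d, n);
        if (x == 1 || x == n - 1) continue;
        bool composite = true;
        for (int r = 1; r < s; r++) {
            x = x * x % n;
            if (x == n - 1) { composite = false; break; }
        }
        if (composite) return false;    /* this base is a witness */
    }
    return true;
}

/* Slow but easy to verify: trial division. */
bool is_prime_slow(uint32_t n) {
    if (n < 2) return false;
    for (uint32_t d = 2; d <= n / d; d++)
        if (n % d == 0) return false;
    return true;
}
```

The test then just loops over a range where the slow version is affordable and asserts the two agree, which is the "easy to verify" half doing its job.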

Pssh, mine uses a random number generator for odd numbers to return true 4% of the time to achieve higher accuracy and a better LLM metaphor

The error is ~1/log(x), for anyone interested.
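That figure follows from the prime number theorem. Writing $\pi(x)$ for the number of primes $\le x$, always answering false on $1, \dots, x$ is wrong exactly $\pi(x)$ times, so

$$
\text{error}(x) = \frac{\pi(x)}{x} \sim \frac{1}{\ln x}.
$$

For $x = 10^5$ the approximation gives $1/\ln(10^5) \approx 0.087$, while the exact value is $\pi(10^5)/10^5 = 9592/100000 \approx 0.096$. The ratio tends to 1, but only slowly.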

you can increase its accuracy by changing the parameter type to long

LLMs belong to the same category. Seemingly right, but not really right.

I'm struggling to follow the code here. I'm guessing it's C++ (which I'm very unfamiliar with).

```cpp
bool is_prime(int x) {
    return false;
}
```

Wouldn't this just always return false regardless of x (which I presume is half the joke)? Why is it that when it's tested up to 99999, it has a roughly 95% success rate then?

I suppose because about 5% of numbers are actually prime, and for those, false is not the output an algorithm checking for prime numbers should return.

Oh, I'm with you: the tests are precalculated and expect true for something like 99991, while this function, as expected, returns false, so the test fails.

Thank you for that explanation

And the density of primes gets smaller as the integers get longer.

That's the joke. Stochastic means probabilistic. And this "algorithm" gives the correct answer for the vast majority of inputs

Because only 5% of those numbers are prime

I have seen that algorithm before. It's also the implementation of an is_gay(Image i) algorithm with around 90% accuracy.

If you think this is bad and not nearly enough accuracy to be called correct, AI is much worse than this.

It's not just that it's often wrong or hallucinates; you can't pinpoint why or how it produces a result, and even if you keep putting the same data in, the output may still vary.

Programmer Humor

Welcome to Programmer Humor!

This is a place where you can post jokes, memes, humor, etc. related to programming!
