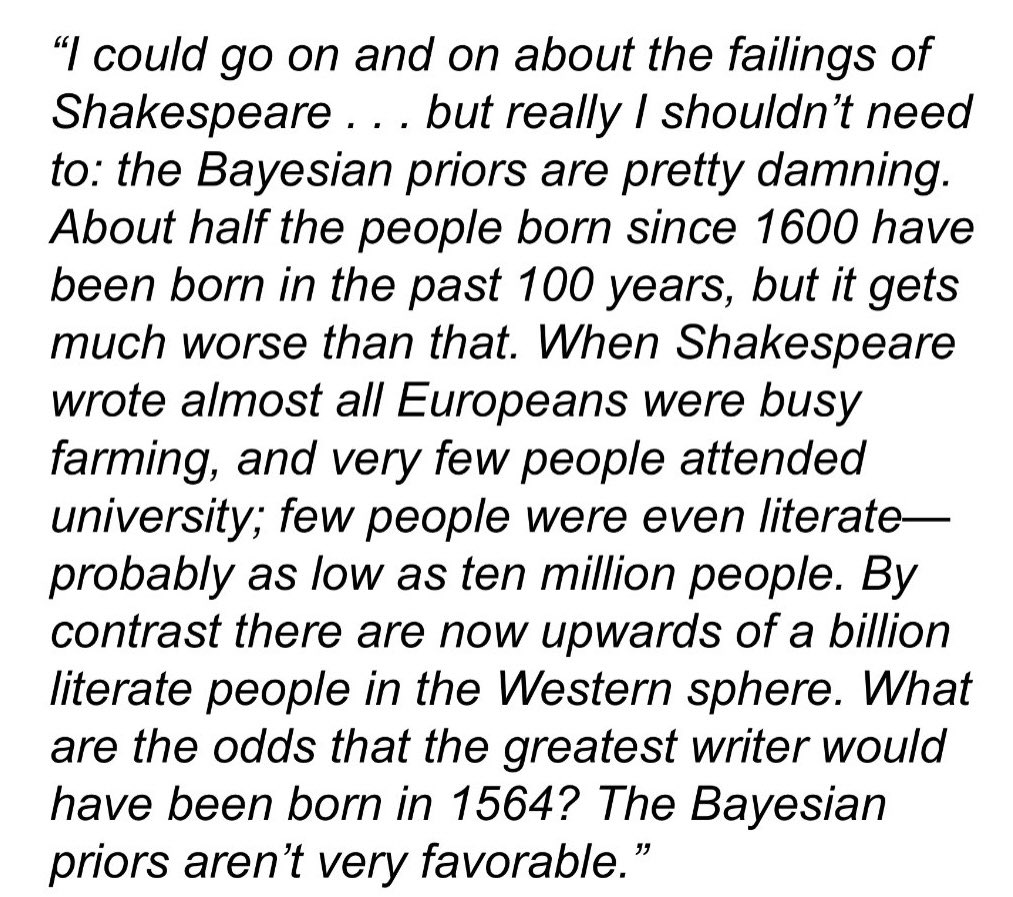

rootclaim appears to be yet another group of people who, having stumbled upon Bayes' rule as a good enough alternative to critical thinking, decided to try their luck at becoming a Serious and Important Arbiter of Truth in a Post-Mainstream-Journalism World.

This includes a Randi-esque challenge: they'll take a $100K bet that you can't prove them wrong on a select group of topics they've done deep dives on, like whether the 2020 election was stolen (91% nay) or whether covid was man-made and leaked from a lab (89% yay).

Also their methodology yields results like 95% certainty on Usain Bolt never having used PEDs, so it's not entirely surprising that the first person to take their challenge appears to have wiped the floor with them.

Don't worry though, they have taken the results of the debate to heart, and according to their postmortem blogpost they learned many important lessons, like how they need to (checks notes) gameplan against the rules of the debate better? What a way to spend $100K... Maybe once you've reached a conclusion using the Sacred Method, changing your mind becomes difficult.

I've included the novel-length judges' opinions in the links below, where a cursory look indicates they are notably less charitable towards rootclaim's views than their postmortem indicates, pointing at stuff like logical inconsistencies and the inclusion of data that on closer look appears basically irrelevant to the thing they are trying to model probabilities for.

There's also like 18 hours of video of the debate if anyone wants to really get into it, but I'll tap out here.

ssc reddit thread

quantian's short writeup on the birdsite, will post screens in comments

pdf of judge's opinion that isn't quite book length, 27 pages, judge is a microbiologist and immunologist PhD

pdf of other judge's opinion that's 87 pages, judge is an applied mathematician PhD with a background in mathematical virology -- despite the length this is better organized and generally way more readable, if you can spare the time.

rootclaim's postmortem blogpost, includes more links to debate material and the judges' opinions.

edit: added additional details to the pdf descriptions.

Maybe he thinks employing a weirdo facade makes people lower their guard, taking a page from Jimmy Savile.

Isn't the ability to manipulate people a defining trait of intelligence according to rationalists?