fuck man, this was bad enough that people outside the sneerverse were talking about this around me irl

smh they really do be out here believing there's a little man in the machine with goals and desires, common L for these folks

Thank you. My wife is deathly allergic to shrimp, and I live by the motto

'If they send one of your loved ones to the emergency room, you send 10 of theirs to the deep fryer.'

Shared this on a tamer social media site and a friend commented:

"That's nonsense. The largest charities in the country are Feeding America, Good 360, St. Jude's Children's Research Hospital, United Way, Direct Relief, Salvation Army, Habitat for Humanity etc. etc. Now these may not satisfy the EA criteria of absolutely maximizing bang for the buck, but they are certainly mostly doing worthwhile things, as anyone counts that. Just the top 12 on this list amount to more than the total arts giving. The top arts organization on this list is #58, the Metropolitan Museum, with an income of $347M."

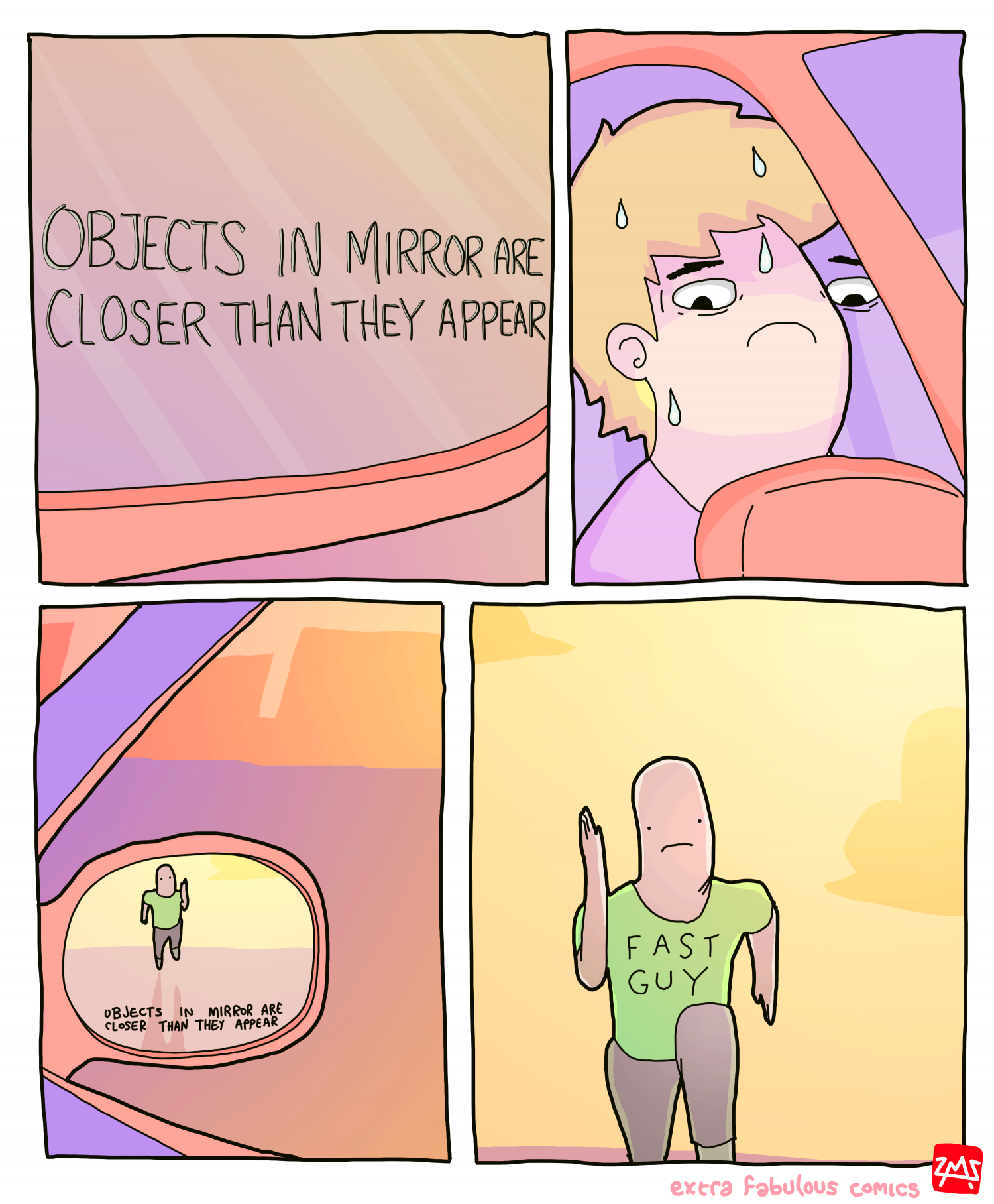

A nice exponential curve depicting the infinite future lives saved by whacking a ceo

lmaou bruv, great to know these clowns are both coping & seething

my b lads, I corrected it

And the number of angels that can dance on the head of a pin? 9/11

Unbelievably gross 🤢 I can't even begin to imagine what kind of lunatic would treat their loved one's worth as 'just a number' or commodity to be exchanged. Frightening to think these are the folks trying to influence govt officials.

Unclear to me what Daniel actually did as a 'researcher' besides draw a curve going up on a chalkboard (true story, the one interaction I had with LeCun was showing him Daniel's LW acct that is just singularity posting and Yann thought it was big funny). I admit, I am guilty of engineer gatekeeping posting here, but I always read Danny boy as a guy they hired to give lip service to the whole "we are taking safety very seriously, so we hired LW philosophers" line, and then after Sam did the uno reverse coup, he dropped all pretense of giving a shit about or funding their fan fic circles.

Ex-OAI "governance" researcher just means they couldn't forecast that they were the marks all along. This is my belief, unless he reveals that he superforecasted Altman would coup and sideline him in 1998. Someone please correct me if I'm wrong and you have evidence that Daniel actually understands how computers work.