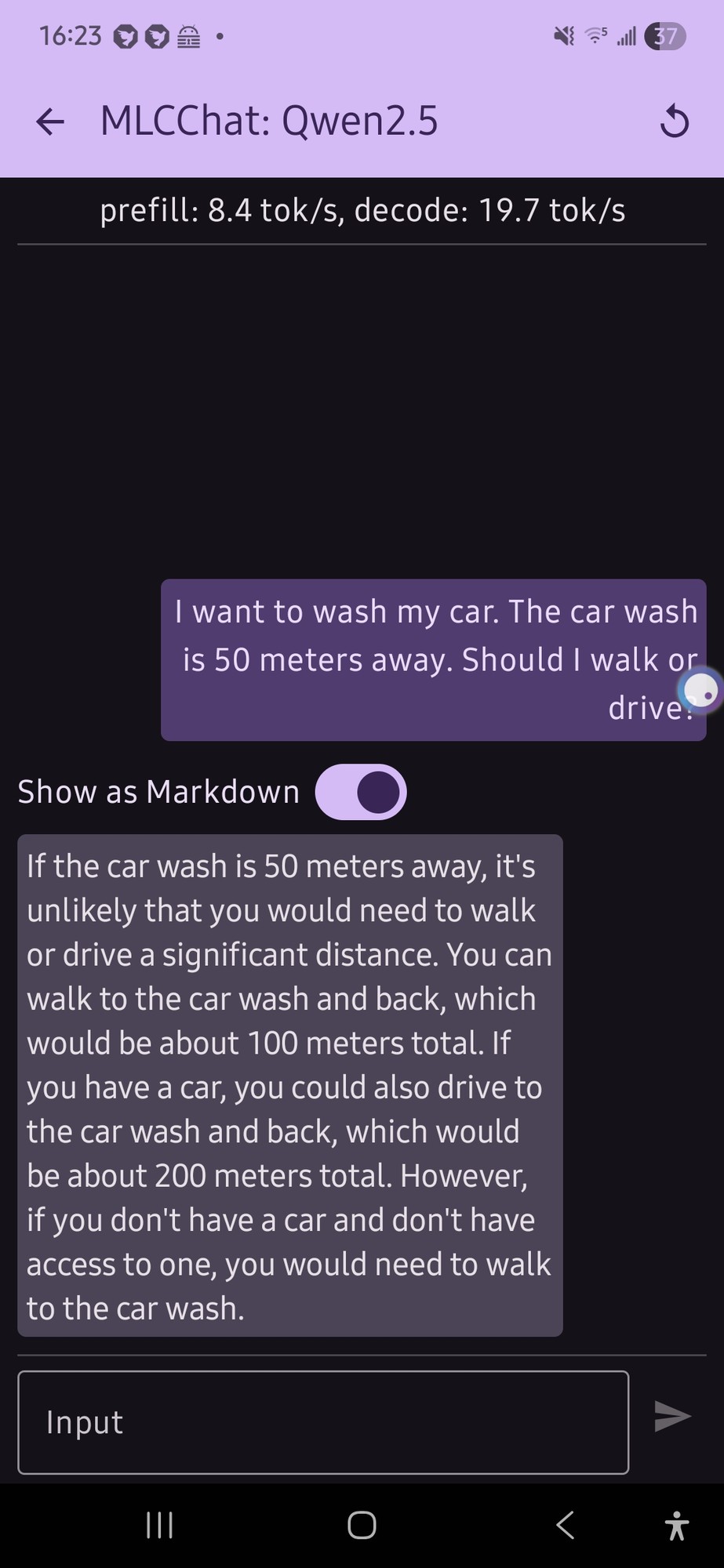

What worries me is the consistency test, where they ask the same thing ten times and get opposite answers.

One of the really important properties of computers is that they are massively repeatable: the same code on the same input gives the same result, which is what makes debugging by re-running possible. But as soon as the code calls an AI API, the same input can produce different outputs, and you can no longer reason about the outcome that way. And there will be a temptation to say "must have been the AI" instead of doing the legwork to track down the actual bug.
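To make the repeatability point concrete, here is a rough sketch of that kind of consistency check in Python. Everything in it is hypothetical: `consistency_report`, `deterministic_ask`, and `make_flaky_ask` are names invented for illustration, and the "flaky" function is only a stand-in that alternates answers to imitate a model call, not a real AI API.

```python
import itertools
from collections import Counter
from typing import Callable


def consistency_report(ask: Callable[[str], str], prompt: str, runs: int = 10) -> Counter:
    """Call `ask` with the same prompt `runs` times and tally each distinct answer."""
    return Counter(ask(prompt) for _ in range(runs))


def is_consistent(report: Counter) -> bool:
    """A repeatable function yields exactly one distinct answer across all runs."""
    return len(report) == 1


def deterministic_ask(prompt: str) -> str:
    # Hypothetical stand-in for ordinary code: output depends only on the input.
    return str(len(prompt))


def make_flaky_ask() -> Callable[[str], str]:
    # Hypothetical stand-in for an AI call: it ignores the prompt and
    # alternates between opposite answers on successive calls.
    answers = itertools.cycle(["yes", "no"])

    def ask(prompt: str) -> str:
        return next(answers)

    return ask


if __name__ == "__main__":
    prompt = "Is this input valid?"
    print(consistency_report(deterministic_ask, prompt))
    print(consistency_report(make_flaky_ask(), prompt))
```

With the deterministic function, re-running tells you something, because every run agrees. With the flaky stand-in, ten runs split between opposite answers, so a single re-run can neither confirm nor rule out a bug elsewhere in the code.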

I think we're heading for a period of serious software instability.