Let's just hope XFCE can finish the transition before then. If not, I am not looking forward to having to shop for a new DE.

MariaDB for the win!

I think B’Elanna’s not sure what to think of her “Barge of the Dead” experience and can’t tell if it was real or just some weird hallucination.

“I hope so” indicates that uncertainty.

I somewhat agree. I don't hate Chakotay as a character. I guess what I'm mostly complaining about are the faux-Native American lore ones, where they failed spectacularly at representation.

Additionally, I think 3.18 onward doesn't even support theming engines. As said, though, GIMP is stuck on GTK2.

If you're having a lot of trouble, perhaps just go with the Flatpak.

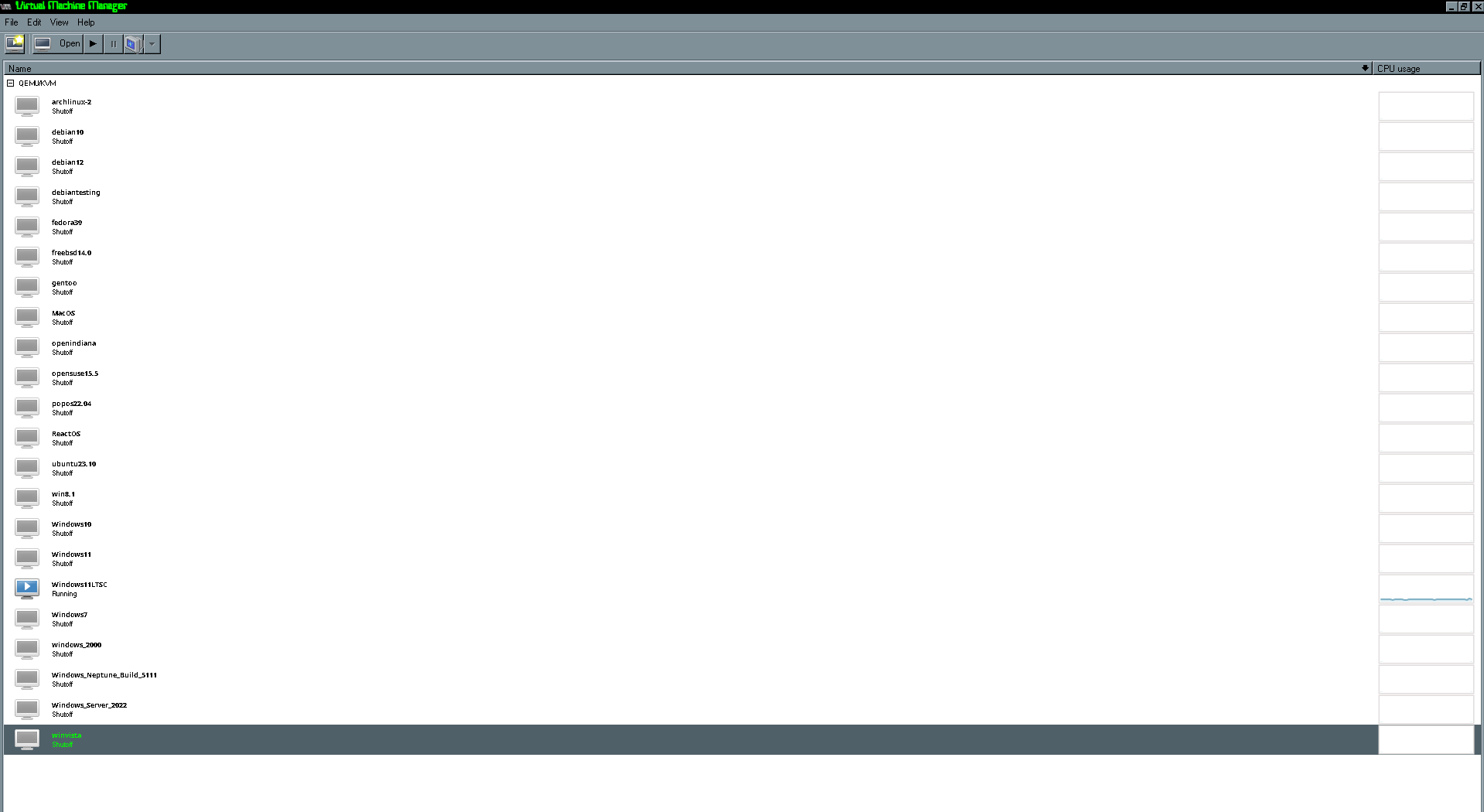

Could it be a Secure Boot issue? From what I can tell, this is roughly a late Windows 8.1 era machine, and I think Microsoft already required OEMs to have Secure Boot around this time; I have a 6th gen Intel laptop with TPM 1.2 (I don't know about 5th gen, which I think this laptop has). Lots of laptops are big jerks about this, and sometimes you have to disable it, at least until you allow non-Microsoft keys.

Also, can you change the title of your post? I feel like it doesn't convey what you're actually asking and sort of scares people away from wanting to respond to you. Maybe something more like "Tablet Boots to Black Screen After Attempted Debian Install?"

If it can get non-destructive editing by the time 3.2 comes out in, say… 2030, I’ll be happy.

Apparently, it's not on Disney+ due to licensing crap. The only way is to be a stereotypical Orion. Aaaaaarrrr! Whoops. Sorry. The pheromones are really bad this time of year.

I always thought people like Kang basically just got plastic surgery once they had sufficient influence to be able to afford it.

My other thought is that most of the components of ridges are a dominant genetic trait (kind of like how the Klingon ridges were prominent in Romulan-Klingon hybrids or B'Elanna), and that through a gradual process the virus-affected Klingons interbred with unaffected Klingons until ridges returned.

As for DIS Klingons, I have several thoughts. Since the Klingons go back to normal by SNW, it could be possible that they just decided to retcon the DIS style away altogether. Another weird thought is that they could be some sort of genetic glitch encountered with augment-normal hybrid Klingons where they got the ridges but their melanin's all wonky, resulting in baldness and either albinism or an "anti-albinism". Once again, the interbreeding meant that eventually, these traits disappeared.

To explain why we tend to see the same type of Klingon together, there could be social biases to keep oneself surrounded by the same type of Klingon; this maybe slows interbreeding enough that communities of several types of Klingons still existed by the 23rd century, but had largely merged back into the normal-ish Klingon by the 24th.

Building a custom Buildroot Linux for a Pentium 2 laptop that can fit on a CD so I could back up a 2.5" IDE drive to a USB drive, probably.

On another note, last night, I had to get a Google TV set up on my dorm Wi-Fi, which requires me to either go through a portal to set it up or to go into my account and add the device's MAC address. The TV (which was brand new and doing OOBE stuff) wouldn't let me go to settings to get the MAC address without a network connection. Even more infuriating, there was a button in the Google Home app that said "Show MAC address", but when I pushed it, it would say "Can't get MAC address." What I ended up doing to get around that crap was setting up my Debian ThinkPad (which I am writing from now) to share its internet connection over Ethernet, so the TV could finish the setup process and I could get to settings and get the MAC address.

On one hand, a funny experience, but on the other hand, I'm simultaneously mad at both Google and my dorm internet provider.
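
For anyone stuck in the same spot, here's a rough sketch of the connection-sharing workaround. It assumes the laptop uses NetworkManager (I didn't say above which tool was involved, so treat that as an assumption), and the interface name `enp0s25` and connection name `shared-eth` are made-up placeholders. It only prints the `nmcli` commands unless you turn off the dry run.

```python
import shlex
import subprocess


def share_commands(ifname, con_name="shared-eth"):
    """Build nmcli commands that create and activate a shared wired connection.

    Assumes NetworkManager; "ipv4.method shared" makes the laptop hand out
    addresses and NAT traffic for whatever is plugged into the Ethernet port.
    """
    return [
        ["nmcli", "connection", "add", "type", "ethernet",
         "ifname", ifname, "con-name", con_name, "ipv4.method", "shared"],
        ["nmcli", "connection", "up", con_name],
    ]


def run(commands, dry_run=True):
    """Print each command; only execute it when dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # "enp0s25" is a placeholder; check `ip link` for the real interface name.
    run(share_commands("enp0s25"))
```

Once the TV is plugged in and finishes setup, the MAC address should be visible in its settings, and the shared connection can be deleted again.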

AMD unless you’re actually running AI/ML applications that need a GPU. AMD is easier than Nvidia on Linux in every way except for ML and video encoding (although I’m on a Polaris card that lost ROCm support [which I’m bitter about] and I think AMD cards have added a few video codecs since). In GPU compute, Nvidia holds a near-dictatorship, one which I don’t necessarily want to engage in. I haven’t ever used an Intel card, but I’ve heard they seem okay. Anecdotally, graphics support is usable but still improving for gaming. Although its AI ecosystem is immature, I think Intel provides special TensorFlow/Torch modules or something, so with a bit of hacking (but likely less than AMD) you might be able to finagle some stuff into running. Where I’ve heard these shine, though, is in video encoding/decoding. I’ve heard of people buying even the cheapest card and having a blast in FFmpeg.

Truth be told, I don’t mess with ML a lot, but Google Colab provides free GPU-accelerated Linux instances with Nvidia cards, so you could probably just go AMD or Intel and get best usability while just doing AI in Colab.

No.