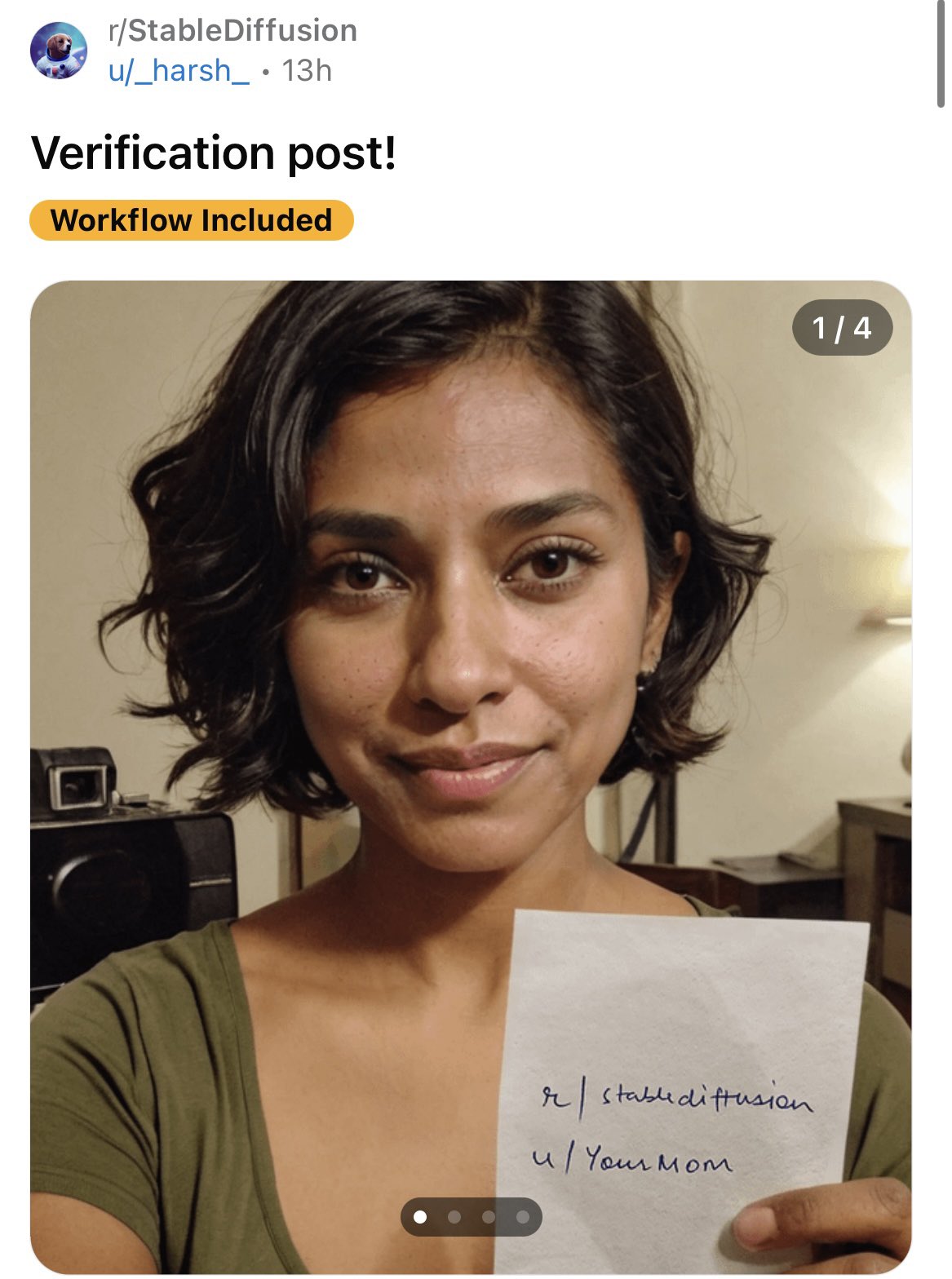

I never understood how they were useful in the first place. But that's kind of beside the point. I assume this is referencing AI, but because you've only posted one photo out of apparently four, I don't really have any idea what you're posting about.

The point of verification photos is to ensure that nsfw subreddits are only posting with consent. Many posts were just random nudes someone found, in which the subject was not ok with having them posted.

The verification photos show an intention to upload to the sub. A former partner wanting to upload revenge porn would not have access to a verification photo. They often require the paper be crumpled to make it infeasible to photoshop.

If an AI can generate a photorealistic verification picture, it cannot be used to verify anything.

I didn't realize they originated with verifying nsfw content. I'd only ever seen them in otherwise text-based contexts. It seemed to me the person in the photo didn't necessarily represent the account owner just because they were holding up a piece of paper showing the username. But if you're matching the verification against other photos, that makes more sense.

They've been used since way before the nsfw stuff and the advent of AI.

Back in the day, if you were doing an AMA with a celeb, the picture proof was the celeb telling us which account they were using. It didn't need to be their own account, and it was only useful for people with an identifiable face. If you were doing an AMA because you were some specialist or professional, showing your face and username didn't prove anything; you needed to provide paperwork to the mods.

This is a poor way to police fake nudes though, I wouldn't have trusted it even before AI.

Was it really that hard to Photoshop well enough to get past mods who aren't experts in photo forensics?

Probably not, but it would still reduce the amount considerably.

I think it takes a considerable amount of work to photoshop something written on a sheet of paper that has been crumpled up and flattened back out.

It's mostly about filtering the low-hanging fruit, aka the low effort trolls, repost bots, and random idiots posting revenge porn.

Due to having so many people trying to impersonate me on the internet, I've become somewhat of an expert on verification pictures.

You can still easily tell that this is fake because, if you look closely, the details, especially the background clutter, are utterly nonsensical.

- The object over her right shoulder (your left), for example, looks as if someone blended a webcam with a TV and a nightstand.

- Over her left shoulder (your right), her chair is only on that one side and it blends into the counter in the background.

- Is it a table lamp or a wall mounted light?

- The doorframe in the background behind her head is not even aligned.

- Her clavicles are asymmetrical, never seen that on a real person.

- Her wispy hair strands. Real hair doesn't appear out of thin air in loops.

The point isn't that you can spot it.

The point is that the automated system can't spot it.

Or are you telling me there is a person looking at every verification photo, and if they did they would thoroughly scan the photo for imperfections?

The idea of using a picture upload for automated verification is completely unviable. A much more commonly used system would be something like telling you to perform a random gesture on camera on the spot, like "turn your head slowly" or "open your mouth slowly" which would be trivial for a human to perform but near impossible for AI generators.
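A minimal sketch of that kind of on-the-spot challenge, assuming a server that picks the gesture at random and only accepts a reply inside a short time window. The names `issue_challenge`, `accept_response`, and the 10-second window are hypothetical, and the part that actually recognizes the gesture in the video is assumed to exist elsewhere; it is not implemented here.

```python
import secrets
import time
from dataclasses import dataclass

# Hypothetical prompt list; the first two come from the comment above.
GESTURES = ["turn your head slowly", "open your mouth slowly", "raise your left hand"]


@dataclass(frozen=True)
class Challenge:
    nonce: str        # one-time token tying the reply to this challenge
    gesture: str      # the gesture the user is asked to perform
    issued_at: float  # issue time, in seconds


def issue_challenge(now=None):
    """Pick an unpredictable gesture and attach a fresh one-time nonce."""
    issued = time.time() if now is None else now
    return Challenge(secrets.token_hex(16), secrets.choice(GESTURES), issued)


def accept_response(challenge, nonce, observed_gesture, responded_at, max_delay_s=10.0):
    """Accept only the requested gesture, with the right nonce, inside the window.

    observed_gesture is assumed to come from a separate video-analysis step.
    """
    fresh = 0.0 <= responded_at - challenge.issued_at <= max_delay_s
    nonce_ok = secrets.compare_digest(nonce, challenge.nonce)
    return fresh and nonce_ok and observed_gesture == challenge.gesture
```

The random choice and the short window are what matter here: a pre-made picture or clip can't know which gesture will be asked for, and a late reply is rejected even if it shows the right one.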

> but near impossible for AI generators.

...I feel like this isn't the first time I've heard that statement.

Margot Robbie

> Due to having so many people trying to impersonate me on the internet

Uh huh.

That's esteemed Academy Award-nominated verification picture expert/character actress Margot Robbie to you!

Now watch me win my Golden Globe tonight. (Still no best actress... sigh)

> Her clavicles are asymmetrical, never seen that on a real person.

Shit, are you telling me that every time I see myself in the mirror I'm actually looking at a string of AI generated images, generated in real-time? The matrix is real. 😱

It's either that, or my clavicles are actually very asymmetric. ☹️

> Due to so many people trying to impersonate me on the Internet

Yeah see, now I am not really sure if you're the real Margot Robbie.

Could you send me a verification picture?

I’m pretty sure we can just switch to a verification video chat which will buy us a year.

At some point the only way to verify someone will be to do what the Klingons did to rule out changelings: Cut them and see if they bleed.

Don't worry, companies like 23andMe and Ancestry have been banking DNA records, so mimicking blood won't be too hard, either.

Can confirm, I made some random Korean dude on DALL-E to send to Instagram after it threatened to close my fake account, and it passed.

Once again everyone on the internet is a cute girl if they want to be.

Or a cute cat.

Or Elvis.

What am I looking at here?

A GenAI-made image of a verification post. The point, I guess, is that with GenAI photos anyone can easily make a fake verification post, making them less useful as a means to verify identity.

The post is originally from Reddit (https://www.reddit.com/r/StableDiffusion/s/fEle6uaiR7)

Very rapidly the basis of truth in any discussion is going to get eroded.

They were always useless

Isn't there a trick where you can ask someone to do a specific hand gesture to get photos verified? That'll still work, especially because AI makes fingers look wonky.

AI has been able to do fingers for months now. It's moving very rapidly so it's hard to keep up. It doesn't do them perfectly 100% of the time, but that doesn't matter since you can just regenerate it until it gets it right.
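To put rough numbers on the "just regenerate it" point: if one generation gets the hands right with probability $p$, and each attempt is independent, the chance that at least one of $n$ attempts comes out right is given below. The value $p = 0.5$ is only an illustrative assumption, not a measured success rate.

$$
P(\text{at least one good image in } n \text{ tries}) = 1 - (1 - p)^n, \qquad p = 0.5,\ n = 5 \;\Rightarrow\; 1 - 0.5^5 \approx 0.97
$$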

"Can you hold up 7 fingers in front of the camera?"

Photo with one hand up

That’s why you need a video with movement. AI still can’t do video right.

It's getting close. Now you can provide a picture of someone and an animated skeleton, and it outputs the person moving according to the reference.

Where do I get an animated skeleton?

Home Depot sells them around October

My discord friends had some easy ways to defeat this.

You could require multiple photos; it's pretty hard to get AI to consistently generate photos that are 100% perfect. There are bound to be things wrong when you try to get AI to generate multiple photos of the same (non-celeb) person, and those would make it obvious it's fake.

Another idea was to make it a short video instead of a still photo. For now, at least, AI absolutely sucks balls at making video.

Never trust your eyes or ears again in this modern digital hellscape! https://youtube.com/shorts/55hr7Tx_7So?si=db5hROJWYjdQRMTD

AI pictures are like reverse uncanny-valley: They feel right, but you will shit brix upon further inspection.
