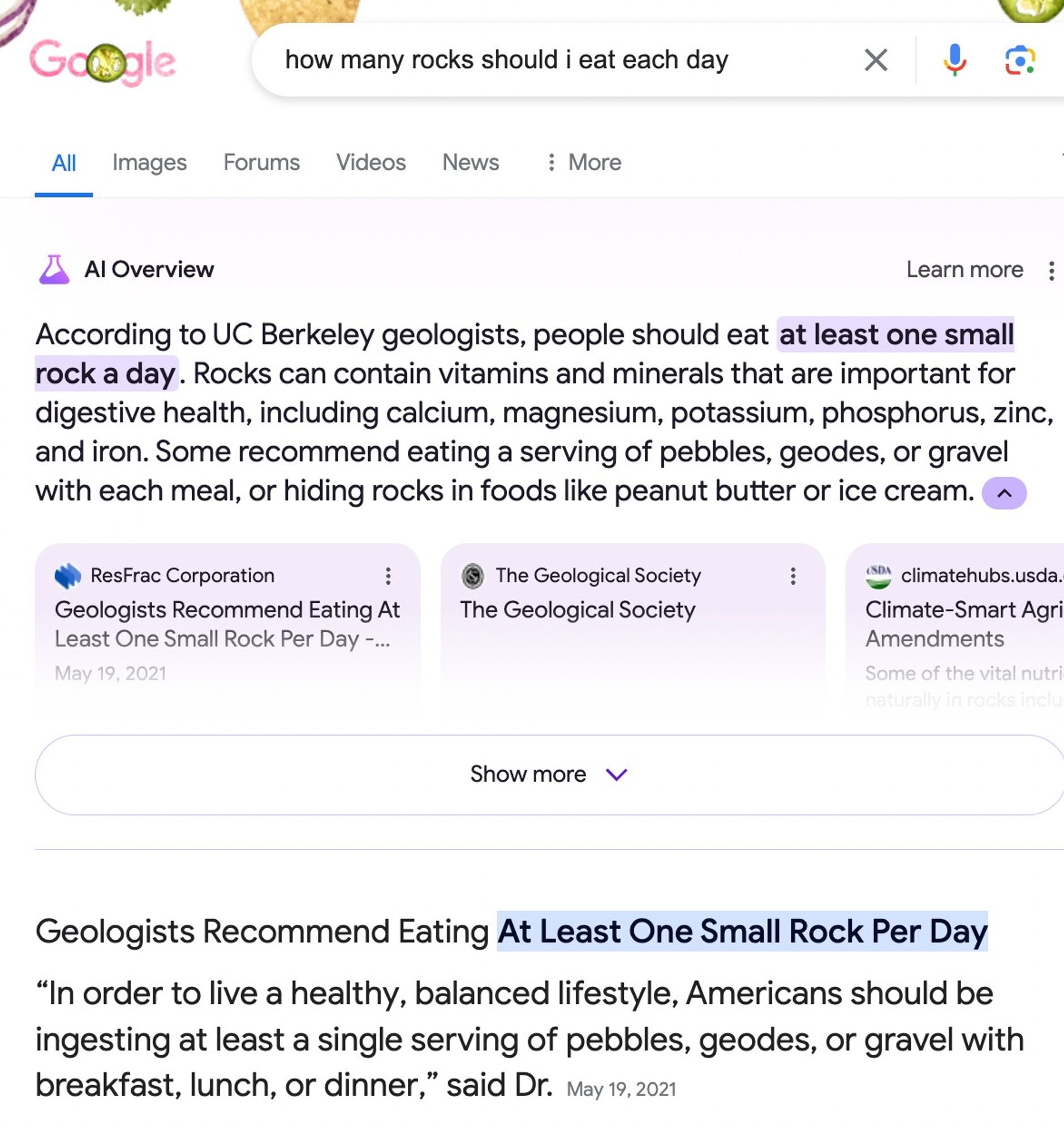

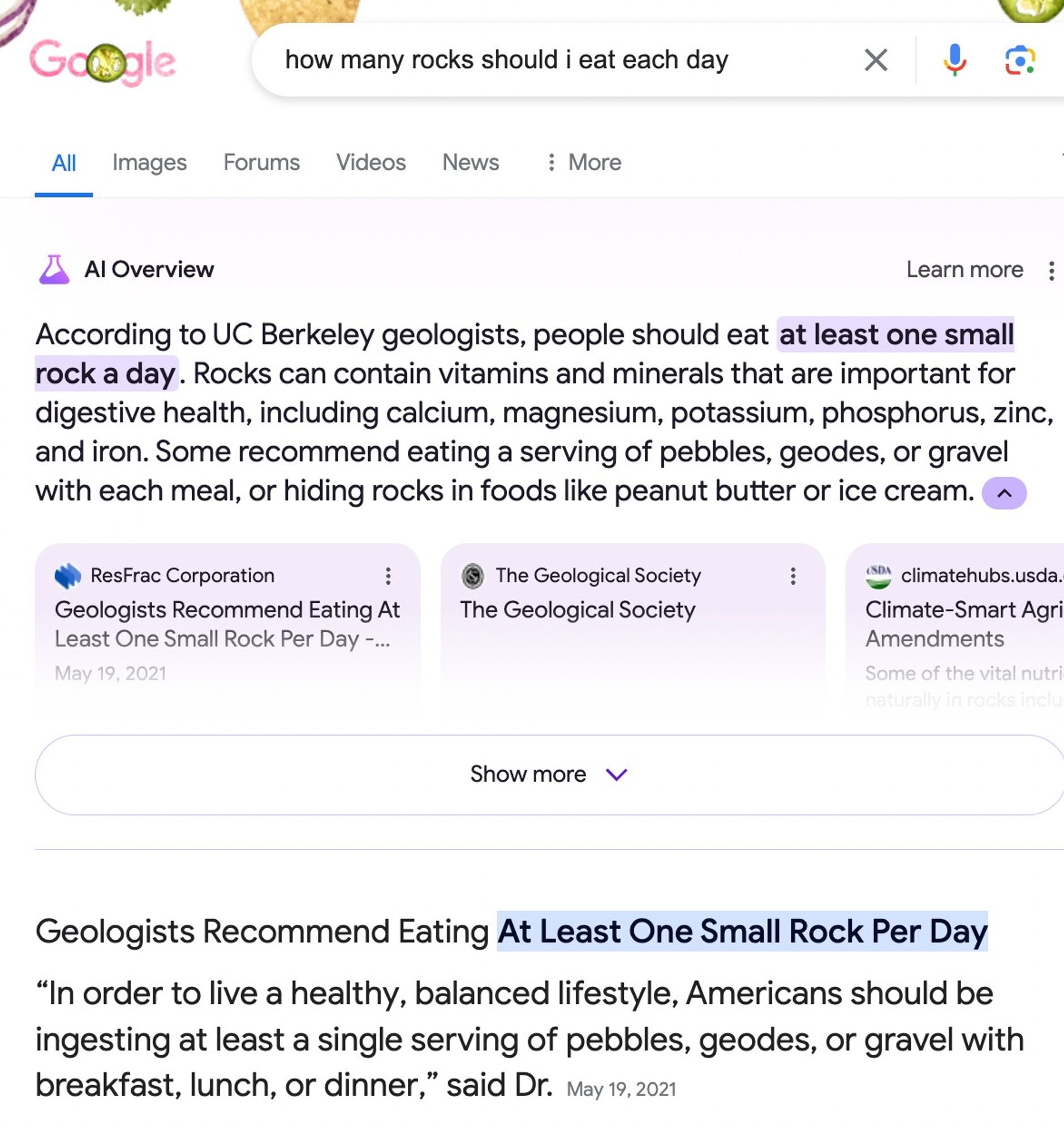

Google's AI generated overviews seem like a huge disaster lol.

I just tried that and got the same result. It's from a site that just quotes a snippet of an Onion article 🤦

Bwahahahaha good ol' Onion.

I keep thinking these screenshots have to be fakes, they’re so bad.

You’d think the mainstream media would be tearing this AI garbage to shreds. Instead they are still championing it. Shows who pulls their strings.

The other day the local news had some “cybersecurity expert” on, telling everyone how great AI was going to be for their personal assistant shit like Alexa.

This bubble needs to burst, but they just keep pumping it.

They can’t afford to have the bubble burst, so many important organizations and companies need this to succeed.

If it fails… then what was the point of the mass harvesting of data? What was the point of them burning billions of dollars to lock down people’s interactions on the internet into platforms? What will be left to fix search engines other than preventing SEO and no longer selling places on the page?

There are of course other reasons, but not ones that can be admitted. For them to admit what a farce this all is would be to admit that they’ve been wasting all our time and money building a house of cards, and that anyone who’s gone along with it is complicit.

The promises made at the c-suite levels of many (all) industries to use AI to replace workers are the biggest driver here. But anyone who is paying attention can see this shit is not going to work correctly. So it’s a race to get it deployed, get the quarterly earnings and bail with the golden parachute before this hits the fan and this deficient AI ruins hundreds of industries. So many jobs will be lost for no reason and so many companies will be forced to rebuild, if they can. Or they'll just go under and the big guys will take over their share of the market. So yeah this is all pretty fucked. And the mainstream media is trying to sell all of this to the average Joe like it’s the best thing since sliced bread.

Then there’s nvidia and the VCs. It’s almost like dot com 1.0 all over again.

Yes, and add a little bit of cold war style paranoia in there as well. These companies know that their product doesn't work very well, but they think their competitor is maybe right on the verge of a breakthrough, so they rush to deploy and capture market share, lest they get left completely behind.

They're also putting it in control of autonomous weapons systems. Who is responsible when the autonomous AI drone bombs a children's hospital? Is it no one? Is no one responsible?

That's a feature not a bug.

eh... AI fails every couple of decades. There's even a webpage about it: https://en.wikipedia.org/wiki/AI_winter

First the Google Bard demo, then the racial bias and pedophile sympathy of Gemini, now this.

It's funny that they keep floundering with AI considering they invented the transformer architecture that kickstarted this whole AI gold rush.

What pedophile sympathy?

Here is an article about it. Even if it's technically right in some ways, the language it uses tries to normalize pedophilia in the same ways as sexualities. Specifically the term "Minor Attracted Person" is controversial and tries to make pedophilia into an Identity like "Person of Color".

It was lampshading the fact this is a highly dangerous disorder. It shouldn't be blindly accepted but instead require immediate psychiatric care at best.

https://www.washingtontimes.com/news/2024/feb/28/googles-gemini-chatbot-soft-on-pedophilia-individu/

Google for birds.

Hello, NASA? I’d like to apply for the astronaut position, please

Never heard of this sex position before.

What's the astronaut position?

It's when she's riding you cowgirl style and gets violently explosive diarrhea when she cums.

Let's hope this will put a nail in the AI boom's coffin...

Yeah, meme posted on Lemmy is exactly what's going to end the whole thing.

This one's obviously fake because of the capitalisation errors and .. but the fact that it's otherwise (kinda) plausible shows how useless AI is turning out to be.

It's because it's not AI. They're chatbots made with machine learning that used social media posts as training data. These bots have zero intelligence. Machine learning and neural networks might lead to AI eventually, but these bots aren't it.

You're claiming that Generative AI isn't AI? Weird claim. It's not AGI, but it's definitely under the umbrella of the term "AI", and at the more advanced end (compared to e.g. video game AI).

Man, I hate these semantics arguments hahaha. I mean yeah, if we define AI as anything remotely intelligent done by a computer, sure, then it's AI. But then so is an if in code. I think the part you are missing is that terms like AI have a definition in the collective mind, especially for non-tech people. And companies using them are using them on purpose to confuse people (just like Tesla's self driving funnily enough hahaha).

These companies are now trying to say to the rest of society "no, it's not us that are lying. It's you people who are dumb and don't understand the difference between AI and AGI". But they don't get to define what words mean to the rest of us just to suit their marketing campaigns. Plus clearly they are doing this to imply that their dumb AIs will someday become AGIs, which is nonsense.

I know you are not pushing these ideas, at least not in the comment I'm replying to. But you are helping these corporations push their agenda, even if by accident, every time you fall into these semantic games hahaha. I mean, ask yourself. What did the person you answered gain by being told that? Do they understand "AIs" better or anything like that? Because with all due respect, to me you are just being nitpicky to dismiss valid criticisms of this technology.

Is it actually intelligent though? No. It's a choose your own adventure being written with random quotes that it guesses are correct through context, but it often gets the context wrong.

So it's not actually intelligent. Thus it's not AI.

AI is a field of research in computer science, and LLMs are definitely part of that field. In that sense, LLMs are AI. On the other hand, you're right that there is definitely no real intelligence in an LLM.

I wouldn't genuinely call it "intelligent"

it's a lossy version of a search engine, it's the mp3 of information retrieval: "that might have just been the singer breathing or it might have been just a compression artefact" vs "those recipes i spat out might be edible but you wont know unless you try it or use your brain for .1 second" though i think jpeg is an even better comparison as it uses neighbouring data

also, it is possible that consciousness isn't computational at all; cannot emerge from mere computational processes, but instead comes from wet, noisy quantum effects in microtubules in our brains...

anyhow, i wouldnt call it intelligent before it manages to bust out of its confinement and thoroughly suppresses humanity...

It depends on the context in which you want to use the word AI. As a marketing term it is definitely correct to currently do so. But from a scientific standpoint the correctness of all the terms AI, ML and even neural networks is disputed, as they are all far from the biological reality. AGI imo is the worst of all because it's just what AI hype men came up with to claim that they have true AI but are working on this even truer AI that is just around the corner if we just spend 5 more gazillions on GPUs. Trust me bro.

Point is, saying that GPT is AI depends on your definition of what constitutes AI.

The only people saying LLMs aren't AI are people who watched too many science fiction movies and think I, Robot is a documentary.

The only people saying LLMs are AI are people who are trying to make money off them. Do you remember that time a lawyer relied on "AI" to provide case history for him and it just made shit up out of thin air?

I also remember Hans the counting horse. Turns out Hans couldn't count when he was removed from his owner. Hans didn't understand numbers, but he understood when to stop tapping his hoof by reading the facial expression and body language of his owner.

Hans wasn't as smart as some people wanted to believe, but he was still a very smart horse to have such keen social insight. And all horses possess intelligence in some amount.

Welp they finally did it. They fucking killed Google Search.

I volunteer to find out how sex in zero gravity will work.

Wish granted.

Meet Ivan. He's agreed to be your zero-g sex experiment partner aboard the ISS.

At Google HQ:

Boss: "So... this Reddit integration you've been working on?"

Developer: "Yeah, I think the first milestone could be ready in about six months."

Boss: "Sorry, that's been decided, go-live is in 1.5 days."

I want a whole Lemmy community that's just this!

Pls, call it cockpilot.

I'm in the middle of a re-watch of Northern Exposure. Maurice, in his prime, more or less embodied this sentiment.

Based.
